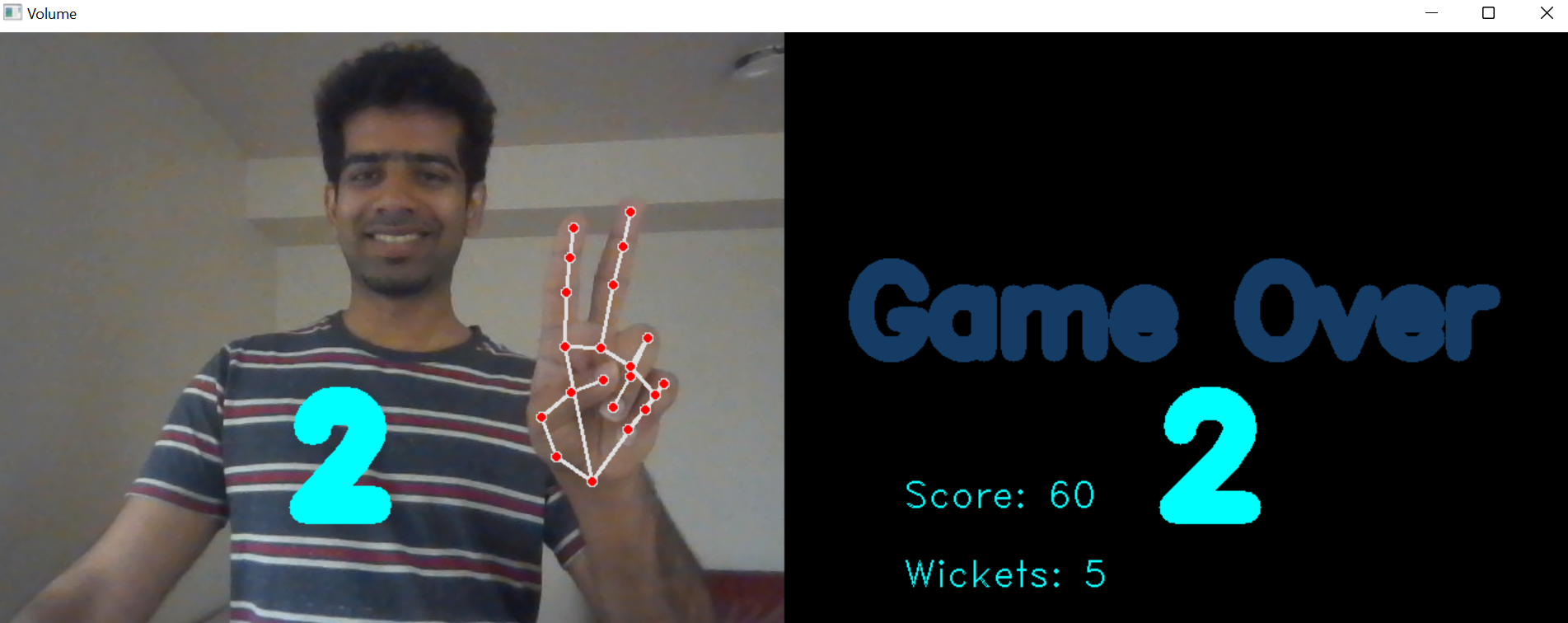

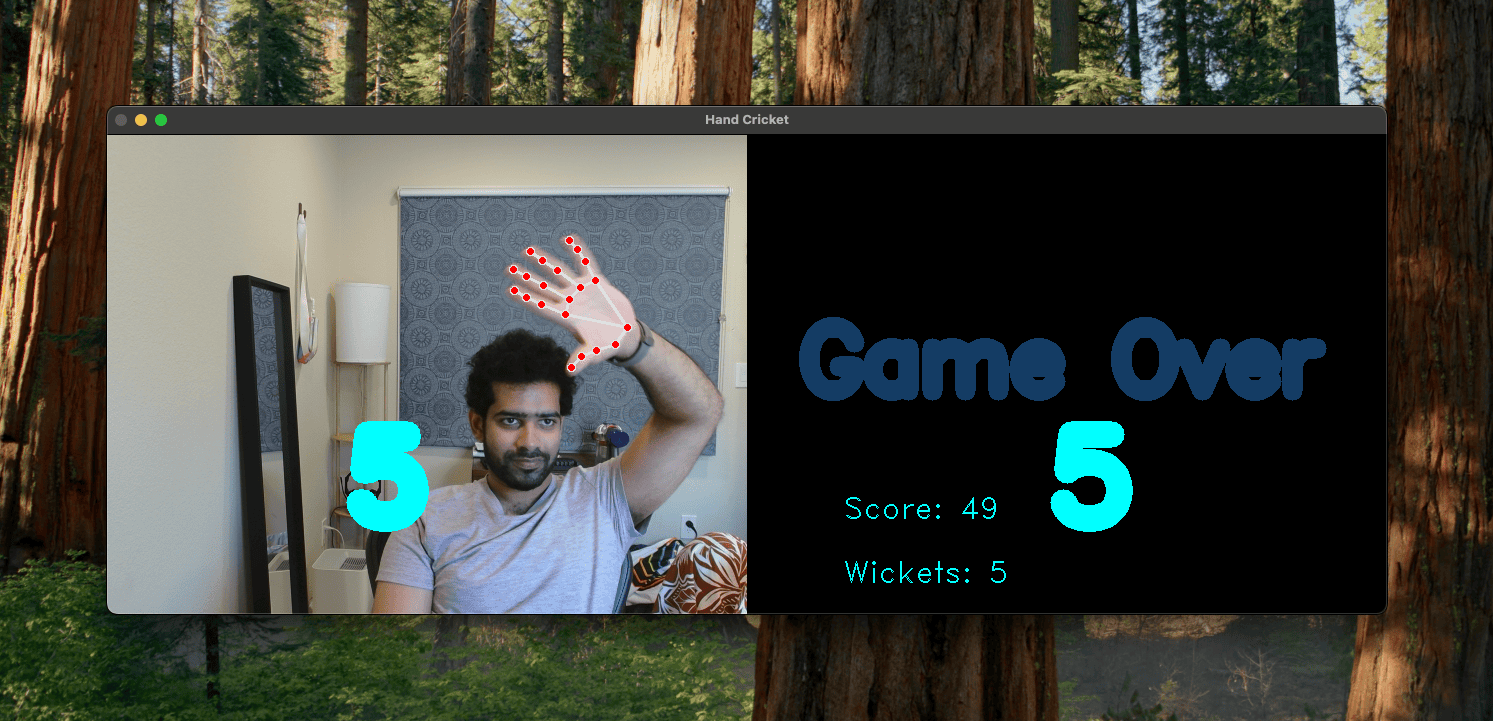

Hand Cricket using OpenCV & MediaPipe

This project is an interactive computer vision application that recreates the classic Indian game of hand cricket using real-time hand gesture recognition. Built with Python, OpenCV, and MediaPipe, it lets a player play cricket against the computer using only hand gestures captured through a webcam, with no keyboard or controller required.

Project Overview

Hand cricket is a simple and popular street/indoor game widely played by children in India. In the traditional version, two players simultaneously show numbers using their fingers. If both numbers match, the batsman is declared out; otherwise, the batsman scores runs equal to the number shown. This project digitizes that experience and brings it into an interactive computer vision setting.

The system uses a webcam feed to detect and interpret hand gestures in real time, converting them into meaningful game actions such as batting runs or bowling outcomes. The core idea is to bridge a culturally familiar game with modern AI-based human-computer interaction.

Core Technologies

1. OpenCV (Computer Vision Pipeline)

OpenCV is used for:

- Capturing live video from the webcam
- Processing image frames
- Rendering overlays such as game state, scores, and UI elements

It acts as the backbone for real-time video processing.
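
The three roles above can be sketched as a minimal capture-and-overlay loop. This is an illustration under stated assumptions, not the project's actual code: the names `hud_lines` and `run_preview`, the overlay layout, the window title, and the `q` quit key are all hypothetical.

```python
def hud_lines(player_runs, computer_runs, status):
    """Build the text lines drawn on each frame (hypothetical layout)."""
    return [
        f"Player: {player_runs}",
        f"Computer: {computer_runs}",
        status,
    ]


def run_preview(camera_index=0):
    """Show the webcam feed with a score overlay until 'q' is pressed."""
    import cv2  # imported here so hud_lines works without OpenCV installed

    cap = cv2.VideoCapture(camera_index)
    if not cap.isOpened():
        raise RuntimeError("Could not open webcam")
    try:
        while True:
            ok, frame = cap.read()
            if not ok:
                break
            frame = cv2.flip(frame, 1)  # mirror the image horizontally
            # Placeholder values; a real game would pass its current state.
            for i, line in enumerate(hud_lines(0, 0, "Show your hand")):
                cv2.putText(frame, line, (10, 30 + 30 * i),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.8, (0, 255, 0), 2)
            cv2.imshow("Hand Cricket", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        cap.release()
        cv2.destroyAllWindows()
```

Calling `run_preview()` opens the default camera. Keeping the overlay text in a separate function makes it easy to check without a webcam attached.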

2. MediaPipe Hands (Hand Tracking Model)

The project leverages MediaPipe’s hand tracking solution to detect and track hand landmarks. MediaPipe provides 21 3D landmarks for each detected hand in every frame, which makes finger detection and gesture interpretation feasible in real time.

This allows the system to:

- Identify finger positions
- Count the number of fingers shown
- Translate gestures into discrete numeric values (e.g., 1, 2, 3, 4, 6 runs)

How It Works

1. Hand Detection and Landmark Extraction

Each frame from the webcam is processed using MediaPipe:

- A palm detection model identifies the hand region
- A landmark model extracts 21 keypoints covering the wrist, finger joints, and fingertips

These landmarks form the basis for gesture recognition.
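
As a rough sketch of this step, the snippet below runs one frame through a MediaPipe Hands tracker and converts the normalized landmarks to pixel coordinates. The helper names `to_pixel_points` and `extract_hand_points` are hypothetical, and using only the first detected hand is an assumption rather than something the project states.

```python
def to_pixel_points(landmarks, width, height):
    """Convert normalized landmarks (x, y roughly in [0, 1]) to pixel coordinates."""
    return [(int(lm.x * width), int(lm.y * height)) for lm in landmarks]


def extract_hand_points(frame_bgr, hands):
    """Return 21 (x, y) pixel points for the first detected hand, or None.

    `hands` is expected to be a mediapipe.solutions.hands.Hands instance.
    """
    import cv2  # imported lazily; only needed when processing real frames

    rgb = cv2.cvtColor(frame_bgr, cv2.COLOR_BGR2RGB)  # MediaPipe expects RGB
    results = hands.process(rgb)
    if not results.multi_hand_landmarks:
        return None  # no hand visible in this frame
    height, width = frame_bgr.shape[:2]
    first_hand = results.multi_hand_landmarks[0]
    return to_pixel_points(first_hand.landmark, width, height)
```

A tracker could be created with `mediapipe.solutions.hands.Hands(max_num_hands=1, min_detection_confidence=0.5)` and passed in as `hands`. The confidence value here is a placeholder, not a setting taken from this project.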

2. Gesture Recognition Logic (Handcrafted)

The landmarks come from MediaPipe’s learned models, but the gesture layer built on top of them is rule-based rather than a trained classifier:

- Finger states (up/down) are determined using geometric relationships between landmarks
- The number of raised fingers is mapped to a run value, so each gesture corresponds to a cricket score

These rules are computationally lightweight, but they required careful manual design of thresholds and conditions.
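
One possible form of such rules is sketched below. The landmark indices follow MediaPipe Hands numbering, but the specific tests are assumptions and not necessarily the ones this project uses. A finger counts as raised when its tip is above its PIP joint, which presumes an upright hand. The thumb counts as extended when its tip is farther from the base of the little finger than its IP joint. The mapping of a lone thumb to 6 and a closed fist to "no gesture" is likewise a hypothetical convention.

```python
import math

# MediaPipe Hands landmark indices
THUMB_IP, THUMB_TIP = 3, 4
PINKY_MCP = 17
FINGER_TIPS = (8, 12, 16, 20)   # index, middle, ring, pinky
FINGER_PIPS = (6, 10, 14, 18)


def finger_states(points):
    """Return [thumb, index, middle, ring, pinky] as booleans (True = raised).

    `points` is a list of 21 (x, y) tuples in image coordinates, where y
    grows downward. Assumes an upright hand facing the camera.
    """
    # Thumb: extended if the tip is farther from the pinky base than the IP joint.
    anchor = points[PINKY_MCP]
    thumb_up = (math.dist(points[THUMB_TIP], anchor)
                > math.dist(points[THUMB_IP], anchor))
    # Other fingers: raised if the tip sits above the PIP joint (smaller y).
    others = [points[tip][1] < points[pip][1]
              for tip, pip in zip(FINGER_TIPS, FINGER_PIPS)]
    return [thumb_up] + others


def gesture_to_runs(states):
    """Map finger states to a run value, or None when no valid gesture is shown."""
    thumb, *others = states
    if thumb and not any(others):
        return 6  # assumed convention: thumb alone means six
    count = sum(states)
    return count if count > 0 else None
```

Because both functions take plain coordinate tuples, the thresholds can be tuned against recorded landmark data without running the camera.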

3. Game Engine

The game logic simulates real hand cricket rules:

- Batting phase: Player shows a number → system generates a random number
- Bowling phase: Roles switch after an “out”
- Out condition: Same number from both sides
- Scoring: Accumulate runs until out
- Winning condition: Second innings surpasses target

The system maintains:

- Score tracking
- Turn switching
- Match state (innings, target, winner)
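
The rules and state listed above could be modelled roughly as follows. This is a sketch, not the project's engine: the class name `HandCricketMatch`, the player batting first, the 1 to 6 range for the computer's number, treating the target as the first-innings total, and the tie rule are all assumptions made for illustration.

```python
import random


class HandCricketMatch:
    """Two-innings hand cricket: the player bats first, then the computer."""

    def __init__(self, rng=None):
        self.rng = rng if rng is not None else random.Random()
        self.scores = {"player": 0, "computer": 0}
        self.batting = "player"
        self.innings = 1
        self.target = None   # first-innings total, set when innings 1 ends
        self.winner = None   # "player", "computer", or "tie"

    def play_ball(self, player_number, computer_number=None):
        """Play one ball; the computer's number is random unless supplied."""
        if self.winner is not None:
            raise RuntimeError("match is already over")
        if computer_number is None:
            computer_number = self.rng.randint(1, 6)
        batter_number = (player_number if self.batting == "player"
                         else computer_number)

        if player_number == computer_number:
            self._handle_out()  # matching numbers: the batter is out
        else:
            self.scores[self.batting] += batter_number
            # The chasing side wins as soon as it passes the target.
            if self.innings == 2 and self.scores[self.batting] > self.target:
                self.winner = self.batting
        return computer_number

    def _handle_out(self):
        if self.innings == 1:
            # End of first innings: fix the target and switch roles.
            self.target = self.scores[self.batting]
            self.batting = "computer" if self.batting == "player" else "player"
            self.innings = 2
        else:
            # Chaser is out without passing the target: tie or loss.
            bowling = "player" if self.batting == "computer" else "computer"
            level = self.scores[self.batting] == self.target
            self.winner = "tie" if level else bowling
```

Passing a seeded `random.Random` as `rng`, or supplying `computer_number` directly, makes a match reproducible for testing.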

4. Real-Time Interaction Loop

The full pipeline runs continuously:

- Capture frame
- Detect hand
- Extract landmarks
- Recognize gesture
- Update game state
- Render output

This creates a seamless interactive experience with minimal latency.
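
One detail a loop like this has to settle is when a per-frame gesture becomes a game turn, since the same gesture is visible across many consecutive frames. The source does not say how turns are triggered, so the sketch below shows one hypothetical approach: commit a gesture only after it has been held for a fixed number of frames, then wait for the hand to change or disappear. The names `GestureLatch` and `run_pipeline` and the default of 10 frames are assumptions.

```python
class GestureLatch:
    """Commit a gesture once it has been seen for `hold_frames` frames in a row.

    After committing, the latch stays quiet until the gesture changes or the
    hand disappears, so one held gesture triggers only one game turn.
    """

    def __init__(self, hold_frames=10):
        self.hold_frames = hold_frames
        self._current = None   # gesture seen in the most recent frame
        self._streak = 0       # consecutive frames showing _current
        self._fired = False    # True once _current has been committed

    def update(self, gesture):
        """Feed one per-frame gesture (or None); return it when committed."""
        if gesture != self._current:
            self._current = gesture
            self._streak = 0
            self._fired = False
        if gesture is None:
            return None
        self._streak += 1
        if self._streak >= self.hold_frames and not self._fired:
            self._fired = True
            return gesture
        return None


def run_pipeline(frame_gestures, on_turn, hold_frames=10):
    """Drive the loop over a stream of per-frame gestures (None = no hand)."""
    latch = GestureLatch(hold_frames)
    for gesture in frame_gestures:
        committed = latch.update(gesture)
        if committed is not None:
            on_turn(committed)  # update game state, then render
```

In a live loop, `frame_gestures` would be produced by the capture, detection, landmark, and recognition stages, and `on_turn` would advance the match and redraw the overlay.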

Key Features

- Real-time hand gesture recognition via webcam
- Fully playable hand cricket game without physical input devices
- Rule-based gesture interpretation (no training required)
- Lightweight and efficient implementation
- Interactive UI overlay with score and game status

Challenges & Learnings

This project was developed before the widespread availability of modern foundation models and high-level AI tooling. As a result:

- Gesture recognition logic was entirely handcrafted, requiring significant experimentation
- Handling edge cases like partial hand visibility and inconsistent lighting was non-trivial
- Achieving stable real-time performance required careful optimization

Despite these constraints, the project demonstrates how classical computer vision techniques combined with lightweight ML models can create engaging interactive systems.

Significance

This project sits at the intersection of:

- Computer vision
- Human-computer interaction
- Game design

It highlights how AI can be used not just for automation, but for reimagining familiar human experiences in digital environments. By bringing a culturally rooted game like hand cricket into a vision-based interface, it showcases the potential of intuitive, gesture-driven applications.